As it turns out, the hardware that makes up these extraordinary machines does indeed look a lot like that, though nowadays it is far less twinkly.

“In the 1980s and 1990s, we were much better at flashing lights”, says Professor Mark Parsons, the University of Edinburgh’s Personal Chair in High Performance Computing, and the Director of its supercomputing centre, EPCC.

“Some older supercomputers had large numbers of beautiful lights and, if you knew your stuff, you could tell what programme was running based solely on the pattern they made. These days, supercomputers tend to be imposing black boxes.”

Professor Parsons has just taken delivery of one such box.

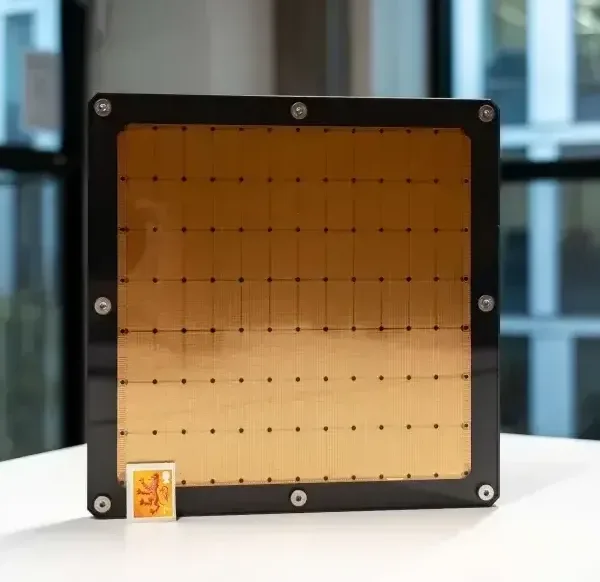

In March 2021 the University installed a Cerebras CS-1, a next-generation AI supercomputer that will allow academics to perform deep learning and machine learning at a scale unseen anywhere else in Europe. It opens up new possibilities in areas such as natural language processing and the data mining of ancient texts.

Evolution of the specifics

Standing on the edge of this next phase of supercomputing at Edinburgh, Professor Parsons is a knowledgeable guide for how we got from the clunky machines of the last century to the arrival of the Cerebras system.

The sleek, modern supercomputers found in facilities like EPCC are on an altogether different scale to the first, hand-built systems developed in the 1960s and 1970s. While they are also worlds away in terms of performance – often billions of times more powerful than those first supercomputers – they exist for largely the same purpose: to help scientists create models and simulations to study things that are difficult to measure physically.

“If you look at the history of science, all the way up to the middle of the last century, we really focused on theory and experiment. But from the middle of the twentieth century onwards – partly because of work that came out of the Second World War – people began using computers more and more to model the world around them.”

Professor Parsons continues: “In general terms, modelling simulations are used for things that are too slow, too fast, too big or too small to study in the lab. For instance, you can’t create a simple laboratory model of a glacier melting or of the Earth’s tectonic plates moving; you need to model them using a computer simulation.”

Performing such complex modelling requires huge amounts of computing power, which is essentially why supercomputers are so large. All of that number-crunching power comes from computer processors – or cores – that perform billions of calculations every second. Rapid advances in microelectronics have meant that the number of cores you can pack into a supercomputer, and their performance, have increased dramatically over the past few decades.

Core strength

While the fastest supercomputers around in the early 2000s contained a few thousand cores, one of EPCC’s newest systems, ARCHER2 – which is the UK’s primary academic research supercomputer – has almost 750,000. That makes it 11 times more powerful than its predecessor, ARCHER, which had a comparatively paltry 120,000 cores and only began operating in 2013.
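As a rough check on those figures, the short Python sketch below compares the growth in core count with the quoted growth in performance. It uses the rounded numbers from this article, and the function name is purely illustrative. The point is only that the extra cores account for roughly a sixfold increase, so the remainder of the 11-fold gain must come from elsewhere, presumably from faster individual cores (an inference, not a figure from EPCC).

```python
# Rounded figures quoted in the article (not exact hardware specifications).
ARCHER_CORES = 120_000
ARCHER2_CORES = 750_000
QUOTED_SPEEDUP = 11  # ARCHER2 relative to ARCHER, as stated in the article


def core_ratio(new_cores: int, old_cores: int) -> float:
    """Return how many times more cores the newer system has."""
    return new_cores / old_cores


ratio = core_ratio(ARCHER2_CORES, ARCHER_CORES)
# The part of the quoted speed-up that core count alone does not explain.
per_core_gain = QUOTED_SPEEDUP / ratio

print(f"Core count ratio: {ratio:.2f}x")
print(f"Implied per-core gain: {per_core_gain:.2f}x")
```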

All of that hardware gives ARCHER2 computing power equivalent to that of a quarter of a million modern laptops. It also makes it extremely heavy – each of its 23 cabinets weighs several tonnes – which meant the floor the system rests on had to be reinforced before it was installed.

Though the University’s involvement in high-performance computing dates back to the 1980s, EPCC was established in 1990 to act as a focal point for work at Edinburgh. In the 30 years since, EPCC has established itself as an international centre of excellence in supercomputing and, as well as ARCHER2, hosts several national high-performance systems and data stores.

The incredible pace of progress in the field means the next big leap forward is never too far away. As Professor Parsons explains, it is an exciting time for supercomputing.

“Systems like ARCHER and ARCHER2 are what are known as petascale supercomputers, which means they are able to perform one million billion calculations per second,” he says. “As impressive as that is, the next generation of supercomputers – which we call exascale systems – are one thousand times faster. They will have in the region of five to ten million cores, which will enable them to do one billion billion calculations every second. Work is already underway at Edinburgh that will make it one of the few places in Europe that is able to host an exascale computer.”
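To put those two scales side by side, here is a minimal Python sketch. The rates are the round numbers Professor Parsons quotes; the workload size is a made-up figure chosen purely for illustration, and the run times assume each machine sustains its peak rate, which real systems rarely do.

```python
PETASCALE = 10**15  # one million billion calculations per second
EXASCALE = 10**18   # one billion billion calculations per second


def seconds_to_finish(total_calculations: int, rate_per_second: int) -> float:
    """Idealised run time, assuming the machine sustains its peak rate."""
    return total_calculations / rate_per_second


# Hypothetical workload of 3.6 * 10**21 calculations (illustrative only).
WORKLOAD = 36 * 10**20

speedup = EXASCALE // PETASCALE
peta_hours = seconds_to_finish(WORKLOAD, PETASCALE) / 3600
exa_hours = seconds_to_finish(WORKLOAD, EXASCALE) / 3600

print(f"Exascale is {speedup} times faster than petascale")
print(f"Petascale: {peta_hours:.0f} hours; exascale: {exa_hours:.0f} hour")
```

Under these assumptions, a job that would occupy a petascale machine for 1,000 hours (about six weeks) would finish on an exascale machine in a single hour.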

Quantum leap

However, as the scale and power of supercomputers have expanded, so have their running costs, which is where the concept of quantum computing comes in.

Professor Parsons explains: “We realised that we couldn’t just keep building bigger and bigger systems, because the power requirements are becoming very large. The beauty of quantum computing systems is that, in theory, they can solve large problems using very small amounts of power.”

The idea of using the properties of quantum mechanics to calculate things emerged in the late 1970s, but Professor Parsons says the challenge today is that we still only have the technology to make very small quantum computers. At the moment you can only solve trivial problems with them.

Companies like Microsoft, IBM and Google are spending huge amounts of money on quantum computing, and EPCC is itself providing supercomputing services for groups trying to model and develop the technology, but a great deal more work is required to make it feasible.

“The future almost certainly is quantum computing; however, it’s very unclear how long that will take. There’s a lot of hype at the moment, but developing the technology that’s required is a real struggle,” says Professor Parsons.

Along with the blinking lights, the fleets of whirring fans that once stopped the fastest supercomputers overheating have made way for newer, water-cooled systems. With developments continuing at such a relentless pace, it undoubtedly won’t be long until the next generation of supercomputers is switched on inside EPCC and centres like it.

Image credit: ARCHER, Angus Blackburn; ARCHER2 and Prof Parsons, EPSRC