It’s no secret that artificial intelligence (AI) is evolving at a mind-boggling pace. Specialist software companies around the world, such as Google and its subsidiary DeepMind, are building systems that are already being trained to outperform humans at certain tasks and games.

However, while systems that focus on learning a single task particularly well are starting to achieve breakthroughs, developing systems that can transfer the skills learned on one task to another is a greater challenge.

The truth about machine learning

It’s what Dr Doumas, Senior Lecturer in the School of Philosophy, Psychology and Language Sciences at Edinburgh, describes as one of the “dirty little secrets” in the machine learning community: “These systems, while spectacular at single tasks, are terrible at generalisation between tasks.”

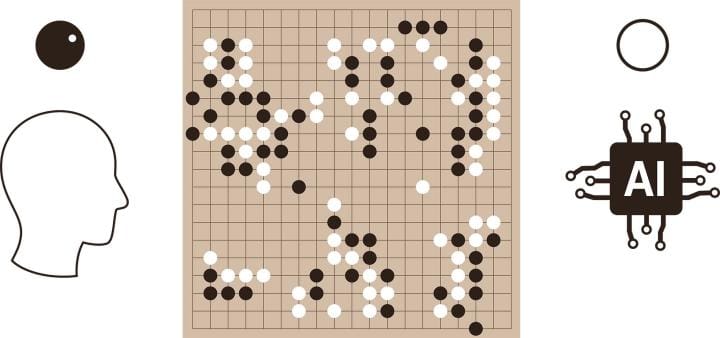

What does that mean, exactly? “So, while a machine system might learn to play one game better than any human, that system will perform no better than a completely untrained system when trying to play a related game,” he explains. “For example, the model that learned to play chess was no better than a completely untrained network when trying to play Go, and a machine trained to play a video game like Breakout was completely unable to transfer that learning to a related game like Pong.”

Breakout and Pong are both hugely popular computer games with a paddle and ball format released by Atari Incorporated in the 1970s. Go is a two-player board game involving stones used to surround an opponent’s territory on a grid-marked board. It originated more than 2,500 years ago in China and has been developed in recent times to be played digitally.

How computers and humans learn

For years programmers have been working on systems that can beat the world’s best human opponents in these and similar games, recently with increasing success. But once a system has learned to play one of these games, what next? For humans, the ability to apply what we have learned elsewhere is an intrinsic part of our intelligence. AI systems aren’t as sophisticated.

“Unlike statistical machine learning algorithms, people are exceptional generalisers,” Dr Doumas continues. “We routinely use what we know about one domain to reason about another. When we learn a concept like ‘more’ we can apply it to anything from money, to bales of hay, to patience. We learn mathematics in school before we learn physics precisely because we apply what we have learned in mathematics to help us understand physics. When we learn to play one video game, we get better at playing related video games for free.”

John Hummel, Professor of Cognitive Psychology at the University of Illinois, served as Dr Doumas’s PhD advisor at the University of California, Los Angeles (UCLA), in the United States in 2005 and has collaborated with him intermittently since. He adds: “And when we are done playing the game, we drive ourselves home, kiss our children, and make ourselves dinner. When the world’s best [statistical machine learning algorithm] Go player is done with a game, it simply shuts down because there is literally nothing else it can do.”

However, Dr Doumas and Professor Hummel’s research explored creating a system with the potential to change this.

“I was working with John Hummel and Keith Holyoak in a lab interested in how humans reasoned by making analogies. John and Keith had developed a computational model called LISA that did a really good job modelling how people make analogies and use those analogies to reason,” Dr Doumas explains.

“Like all successful analogy models, LISA used structured, or symbolic, representations. There’s a lot of really good evidence to suggest systems cannot make human-level analogies without these kinds of representations,” he continues. “Unlike other symbolic models, though, LISA was a neural network that explained how the symbolic processes underlying relational reasoning could be realised in a neural computing architecture, such as the human brain. However, like all other symbolic models at the time, LISA did not provide an account of where the symbolic representations it relied on came from in the first place.”

Introducing DORA

As he worked towards his PhD in Cognitive and Developmental Psychology at UCLA, Dr Doumas developed a thesis that sought to solve this problem. The result was DORA (Discovery Of Relations by Analogy) – a computational model that could learn structured (or symbolic) representations from non-structured inputs.

After gaining his PhD, Dr Doumas worked as a postdoctoral researcher at Indiana University and then as an Assistant Professor at the University of Hawaii. During this time his research continued, and it has evolved further since he joined the University of Edinburgh as a Lecturer in 2013.

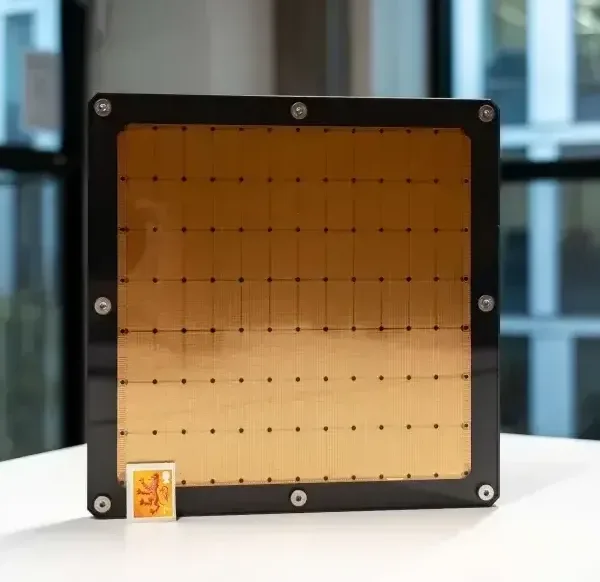

“During my research career I’ve worked on advancing the DORA theory, trying to get symbolic representations from simpler and simpler starting states,” he says. “The current work represents our best success to date – learning from simple visual images, or pixel data, and tests our idea that human generalisation is basically just a process of making more and more complex analogies using these representations.”

Collaboration is key

Dr Doumas expanded his research team in 2016, when he was joined by Guillermo Puebla who, as a PhD student at Edinburgh, helped progress DORA.

“Humans often generalise what they have learned about one situation to a very different one based on patterns of relations between entities,” says Dr Puebla. “This allows us, for example, to apply what we have learnt about solving for x in an algebraic equation to estimate the amount of wood needed to build a roof. The dominant approach to AI these days, deep neural networks, famously lacks this aspect of human cognition.”

As part of his PhD research, Dr Puebla made advances in this area by leading the design of reinforcement learning simulations with DORA. Dr Doumas has also had a long-standing collaboration with Dr Andrea Martin, Lecturer in Psychology at Edinburgh from 2012 to 2017 and now leader of a research group at the Max Planck Institute for Psycholinguistics. Dr Martin conceptualised the original project with Dr Doumas, designed supplemental human experiments, and ran human participants based on DORA predictions.

Dr Martin explains how DORA differs from current systems: “Our system learns relational structure like ‘above’ from a well-known set of tagged photo-realistic images of coloured shapes in different arrangements, like a red pyramid on top of a blue cube. Then it can use that representation of ‘above’ to move the paddle in Pong or Breakout such that the ball is always above the paddle. Other systems do not learn structures like ‘above’ in a way that can be applied to anything other than the objects and contexts they have been trained on.”
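To make the idea of a reusable relation concrete, here is a minimal Python sketch. It is an illustration only and not DORA itself: DORA learns ‘above’ from images, whereas this toy hard-codes it. The `Thing` class, the `above` and `paddle_move` functions, the coordinate convention and the `tolerance` parameter are all assumptions made for the example. What it tries to show is that a relation defined over roles, rather than over particular objects, applies equally to a pyramid on a cube and to a ball over a paddle.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thing:
    """Hypothetical object description: a label plus a position."""
    name: str
    x: float  # horizontal position
    y: float  # height; larger means higher up (an assumption of this sketch)


def above(a: Thing, b: Thing, tolerance: float = 1.0) -> bool:
    """True if `a` is higher than `b` and roughly lined up with it.

    The test never looks at what `a` and `b` are, only at how they relate.
    """
    return a.y > b.y and abs(a.x - b.x) <= tolerance


def paddle_move(ball: Thing, paddle: Thing) -> str:
    """Toy policy: move the paddle until the ball is above it."""
    if above(ball, paddle):
        return "stay"
    return "right" if ball.x > paddle.x else "left"


# The same relation describes a scene of shapes...
pyramid = Thing("red pyramid", x=0.0, y=2.0)
cube = Thing("blue cube", x=0.0, y=1.0)
shapes_ok = above(pyramid, cube)

# ...and drives a decision in a paddle-and-ball game.
move = paddle_move(Thing("ball", x=5.0, y=10.0), Thing("paddle", x=2.0, y=0.0))
```

In this sketch nothing about `above` would need to change to handle a new pair of objects, which is the property Dr Martin describes; how DORA actually acquires and represents the relation is described in the team’s paper, not here.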

The team’s research has been collated into a paper published in the journal Psychological Review in February 2022. It shows that the system’s capacity to learn structured representations and make analogies using these representations could “really jump start cross-domain generalisation”, as Dr Doumas explains: “For example, like humans but unlike current deep neural networks, DORA can play a video game such as Pong immediately after learning to play a game like Breakout, or perform a psychological task like solving analogy problems after experience with unrelated domains with no additional training, so-called zero-shot learning.”

“DORA’s success comes because it uses the representations it learns from one context to understand a new situation, like playing a novel video game,” he continues. “It uses these representations to make analogies between the two situations – like discovering the fact that both Breakout and Pong involve moving a paddle to hit a ball – and then exploits these analogies to generalise strategies successful in one situation – like following the ball with the paddle – to the other almost immediately.”
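The analogy step described above can be pictured with a small, deliberately simplified Python sketch. It is not the algorithm from the paper: it is a brute-force toy that assumes each game is already described by a handful of relational facts, and all the fact names (`controls`, `hits`, `breaks`, `passes`, `follow`) and object labels (`bat`, `puck`, and so on) are invented for illustration. The two games are given different labels on purpose, so that the mapping has to come from matching structure rather than matching names.

```python
from itertools import permutations

# Hypothetical relational descriptions: (relation, first role, second role).
BREAKOUT = {
    ("controls", "player", "bat"),
    ("hits", "bat", "puck"),
    ("breaks", "puck", "brick"),
}
PONG = {
    ("controls", "player", "paddle"),
    ("hits", "paddle", "ball"),
    ("passes", "ball", "opponent"),
}


def objects(facts):
    """Collect every object mentioned in a set of facts, in a stable order."""
    return sorted({arg for _, *args in facts for arg in args})


def best_mapping(source, target):
    """Try every one-to-one pairing of objects and keep the one under which
    the most source facts, once translated, also hold in the target."""
    src, tgt = objects(source), objects(target)
    best, best_score = {}, -1
    for perm in permutations(tgt, len(src)):
        mapping = dict(zip(src, perm))
        score = sum(
            (rel, *(mapping[a] for a in args)) in target
            for rel, *args in source
        )
        if score > best_score:
            best, best_score = mapping, score
    return best


def transfer(rule, mapping):
    """Rewrite a rule from the source game in the target game's terms."""
    rel, *args = rule
    return (rel, *(mapping[a] for a in args))


mapping = best_mapping(BREAKOUT, PONG)
pong_rule = transfer(("follow", "bat", "puck"), mapping)
```

Here the strategy “follow the puck with the bat”, stated for the first game, is carried over to the second game’s paddle and ball without any further learning. Trying every pairing only works for a few objects; it is a stand-in for the analogy-making Dr Doumas describes, not a description of how DORA does it.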

Jump starting the next phase

New areas for exploration have already begun. Surprised at how “brittle” current methods for performing reinforcement learning with symbolic representations are, Dr Doumas and Dr Puebla, who is due to complete his PhD at Edinburgh very soon and is now a postdoctoral researcher at the University of Bristol, developed a more effective method as part of Dr Puebla’s PhD thesis. The work has resulted in another paper, recently submitted for publication.

“In a new preprint we have shown that our model is capable of learning to play several Atari games using only relational information,” explains Dr Puebla. “We hope that our approach leads to a model that can learn relational rules to behave effectively in large and complex environments.”

While new models are being created, work on the DORA model also continues thanks to the next generation of PhD students at Edinburgh. The system has been extended beyond learning spatial relations such as ‘above’ to more complex relations such as ‘supports’ and ‘surrounded by’, as Dr Doumas explains: “My student Ekaterina Shurkova has integrated the DORA model in a pipeline that performs visual reasoning using constraints from what we know about human psychology. The model currently outperforms leading statistical learning approaches on a variety of visual reasoning tasks as well.”

In the right hands

Many feel that developments in AI open up a world of possibilities that should be embraced, while others are more wary of the prospect of the rise of the machines. Progress in developing these new systems is incredibly impressive, yet it raises questions about what they could be used for. So, are there any concerns about creating AI models that learn more like humans?

“Current AI doesn’t scare me as much as it scares others,” says Dr Doumas. “While any given statistical learning machine can be exceptional at a given task, the properties of the approach basically guarantee that they won’t be good at much else. But humans have complementary strengths. Moving towards human-level AI brings with it the potential for greater and more dangerous misuse.”

Professor Hummel shares a hypothetical example of how a more human, smarter type of AI could employ its new-found skills: “A statistical Go player wins by memorising every possible response to every possible board configuration and every possible outcome thereof. A smart Go player wins by figuring out which city houses the computer running its opponent and cutting power to that city. The algorithms described in our paper could be used to create a smart Go player.”

While there is always the risk of misuse, Dr Doumas is optimistic about using the system for good. One of many real-world applications could include the enhancement of the teaching and learning experience for future students: “For example, if we develop a system that learns like people do, we can test all kinds of educational curricula on that system and see what works best. We can use this information to help us design effective educational interventions.”

Incorporating our knowledge of how humans learn when designing machine learning systems can have true benefits for society if handled wisely. For Dr Doumas, this could lead to a future where a mutually beneficial relationship between human and artificial intelligence exists: “The impact of our work is likely a little far off, but just as understanding people can help us develop better computational systems, once we develop these systems, we can use them to help us understand human minds.”

Images: Google/Getty 2016; Sean Gallup/2011 Getty Images; Hakule/iStock/Getty Images Plus.